Following our previous study on biophysical and spatial sensing, we narrowed the focus of our research and restricted a new study to MMI with only two biosignals: the mechanomyogram (MMG) and the electromyogram (EMG) of arm muscle gestures. Research in New Interfaces for Musical Expression (NIME) has examined each of these signals on its own. To the best of our knowledge, however, their combination has not been investigated in this field. The following questions initiated this study: How can EMG and MMG be analysed to extract complementary information about gestural input? How can musicians control the two biosignals separately? What are the implications for the low-level sensorimotor system? And how can novices learn to control the two modalities skillfully?

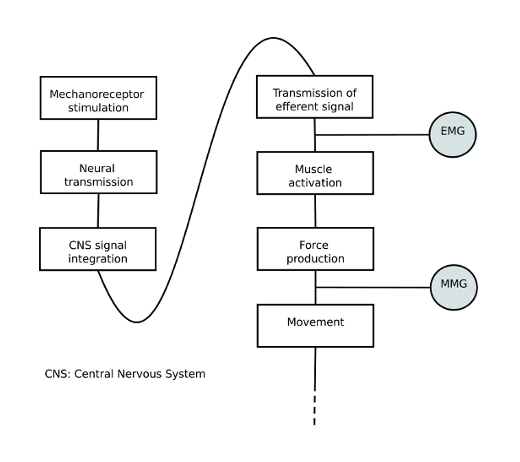

Our interest in a combined study of the two biosignals lies in the fact that both are produced by muscle contraction, yet each reports a different aspect of muscle articulation. The EMG records the electrical activity of the muscle, triggered by the neural impulses that cause it to contract. The MMG is a sound produced by the mechanical oscillation of the muscle tissue as it contracts and extends.

To learn about the differences and similarities between EMG and MMG, we reviewed the related biomedical literature and found comparative EMG/MMG studies in sensorimotor-system and kinesiology research. From these, we selected the aspects of gestural exercise for which MMG and EMG provide complementary information. For the interested reader, further details will soon be available in our related NIME paper.

We used those aspects of muscle contraction to design a small gesture vocabulary to be performed by non-expert players with a bi-modal, biosignal-based interface created for this study. The interface was built by combining two existing, separate sensors: the Biomuse for the EMG signal and the Xth Sense for the MMG signal. Armbands with EMG and MMG sensors were placed on the forearm. One MMG channel and one EMG channel were acquired from each user's dominant arm, over the wrist flexors, a muscle group close to the elbow joint that controls finger movement.

To train the users on our new musical interface, we designed three sound-producing gestures. The EMG and MMG are translated into sound independently, so every time a user performs one of the gestures, they hear one sound, the other, or a combination of the two. Users were asked to perform each gesture twice: the first time without any instruction, and the second time after a detailed explanation of how the gesture should be executed. At the end of the experiment, we interviewed the users about how difficult it was to control the two sounds. We studied the players' ability to articulate the two modalities through video and sound recordings, and analysed their interviews.
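The post does not say how each biosignal is turned into sound. As a rough illustration of what "independently translated" can mean, here is a minimal sketch under our own assumptions: each channel gets an RMS amplitude envelope computed over fixed, non-overlapping windows, and that envelope alone sets the gain of its own sound. The RMS envelope, the window size, the per-channel ceiling values and the function names (`rms_envelope`, `normalise`, `map_to_gains`) are all hypothetical and are not the study's actual mapping.

```python
import math
from typing import List, Sequence, Tuple


def rms_envelope(samples: Sequence[float], window: int) -> List[float]:
    """RMS amplitude over consecutive, non-overlapping windows (assumed feature)."""
    envelope = []
    for start in range(0, len(samples) - window + 1, window):
        frame = samples[start:start + window]
        envelope.append(math.sqrt(sum(x * x for x in frame) / window))
    return envelope


def normalise(envelope: Sequence[float], ceiling: float) -> List[float]:
    """Scale an envelope into a 0..1 gain, clipping at a hypothetical per-channel ceiling."""
    if ceiling <= 0:
        raise ValueError("ceiling must be positive")
    return [min(value / ceiling, 1.0) for value in envelope]


def map_to_gains(emg: Sequence[float], mmg: Sequence[float], window: int,
                 emg_ceiling: float, mmg_ceiling: float) -> List[Tuple[float, float]]:
    """Return (EMG sound gain, MMG sound gain) per window.

    Each gain depends only on its own biosignal, so either sound, or both,
    can be heard depending on which signal is active.
    """
    emg_gain = normalise(rms_envelope(emg, window), emg_ceiling)
    mmg_gain = normalise(rms_envelope(mmg, window), mmg_ceiling)
    return list(zip(emg_gain, mmg_gain))


if __name__ == "__main__":
    # Synthetic signals: EMG active only in the first half, MMG only in the second.
    n = 400
    emg = [math.sin(i) if i < n // 2 else 0.0 for i in range(n)]
    mmg = [0.0 if i < n // 2 else 0.5 * math.sin(0.3 * i) for i in range(n)]
    for emg_g, mmg_g in map_to_gains(emg, mmg, window=100, emg_ceiling=1.0, mmg_ceiling=0.5):
        print(f"EMG sound gain {emg_g:.2f} | MMG sound gain {mmg_g:.2f}")
```

Keeping the two chains fully separate mirrors the setup described above, where a gesture can trigger one sound, the other, or both. A real instrument would likely use overlapping windows or smoothing so the gain does not jump at frame boundaries.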

The results of the study showed that: 1) combined MMG/EMG provides a richer bandwidth of information on gestural input than either signal alone; 2) the complementary information in MMG/EMG varies with contraction force, speed, and angle; 3) novices can rapidly learn to control the two modalities independently.

These findings motivate further exploration of an MMI approach to biosignal-based musical instruments. Future work should develop our bi-modal interface further by designing discrete and continuous multimodal mappings based on the complementary information in EMG and MMG. We should also look at custom machine learning methods for representing and classifying gestures from combined muscle biodata, and at data-mining techniques that could help identify meaningful relations within the biodata. This information could then inspire new applications for bodily musical performance, such as an instrument that is aware of its player's level of expertise.

Pictures by Baptiste Caramiaux and Alessandro Altavilla.