I kicked off my PhD studies by extending the Xth Sense, a new biophysical musical instrument based on a muscle sound sensor I developed, with spatial and inertial sensors, namely whole-body motion capture (mocap) and an accelerometer. The aim was to understand the potential of combined sensor data for multimodal control of new musical interfaces.

An excerpt from our related paper:

“In the field of New Interfaces for Musical Expression (NIME), sensor-based systems capture gesture in live musical performance. In contrast with studio-based music composition, NIME (which began as a workshop at CHI 2001) focuses on real-time performance. Early examples of interactive musical instrument performance that pre-date the NIME conference include the work of Michel Waisvisz and his instrument, The Hands, a set of augmented gloves which captures data from accelerometers, buttons, mercury orientation sensors, and ultrasound distance sensors (developed at STEIM). The use of multiple sensors on one instrument points to complementary modes of interaction with an instrument. However these NIME instruments have for the most part not been developed or studied explicitly from a multimodal interaction perspective.”

Together with my colleagues Baptiste Caramiaux and Atau Tanaka, I designed a study of bodily musical gesture. We recorded and observed the sound-gesture vocabulary of my performance piece Music for Flesh II.

The data recorded for each gesture were two mechanomyogram signals (MMG, or muscle sound), one 3D vector from the accelerometer, and mocap data in the form of 3D positions and quaternions of my limbs.
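To make the shape of these recordings concrete, here is a minimal sketch of how one time-aligned sample could be represented in Python. It is only an illustration: the class and field names (`LimbPose`, `MultimodalFrame`, `time_s`), the limb label, the quaternion ordering, and the sample values are all my assumptions here, not the format of the published dataset. It also assumes the streams have already been resampled to a common clock, which the raw sensors would not provide on their own.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]


@dataclass
class LimbPose:
    """Mocap data for one limb (hypothetical layout)."""
    position: Vec3      # 3D position
    orientation: Quat   # quaternion; (w, x, y, z) order is an assumption


@dataclass
class MultimodalFrame:
    """One time-aligned sample across the three modalities (hypothetical)."""
    time_s: float               # timestamp on an assumed common clock
    mmg: Tuple[float, float]    # the two MMG (muscle sound) channels
    accel: Vec3                 # the single 3D accelerometer vector
    limbs: Dict[str, LimbPose]  # mocap pose per limb, keyed by a made-up label


# Illustrative values only, not measurements from the study.
frame = MultimodalFrame(
    time_s=0.0,
    mmg=(0.02, -0.01),
    accel=(0.0, 0.0, 9.81),
    limbs={"right_forearm": LimbPose((0.3, 1.1, 0.2), (1.0, 0.0, 0.0, 0.0))},
)
```

Keeping the modalities side by side in one record is a design choice that makes later comparison easy, at the cost of committing to a resampling step up front.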

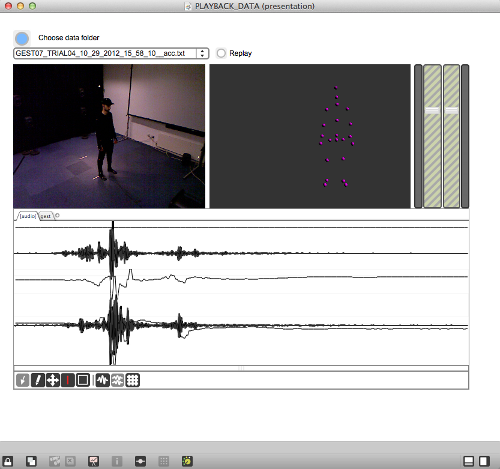

We created a public multimodal dataset and performed a qualitative analysis of the data. By using a custom patch to visualise and compare the different types of data (pictured below), we were able to observe different forms of complementarity in the information collected. We noted three types of complementarity: synchronicity, coupling, and correlation. You can find the details of our findings in the related Work in Progress paper, published at the recent TEI conference on Tangible, Embedded, and Embodied Interaction in Barcelona, Spain.

The software we developed to visualise the multimodal dataset.
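Our analysis was qualitative, but of the three types of complementarity named above, correlation is the one that lends itself most readily to a quick numerical check. The sketch below is not the method from the paper. It assumes two streams already resampled to the same rate, takes a moving RMS envelope of an MMG channel, and computes a Pearson coefficient against the accelerometer magnitude. The function names and the window size are hypothetical choices for illustration.

```python
import math
from typing import List, Sequence, Tuple


def rms_envelope(signal: Sequence[float], window: int) -> List[float]:
    """Trailing moving RMS, a rough amplitude envelope for an MMG channel."""
    out = []
    for i in range(len(signal)):
        chunk = signal[max(0, i - window + 1): i + 1]
        out.append(math.sqrt(sum(x * x for x in chunk) / len(chunk)))
    return out


def magnitude(vectors: Sequence[Tuple[float, float, float]]) -> List[float]:
    """Euclidean norm of each 3D accelerometer sample."""
    return [math.sqrt(x * x + y * y + z * z) for x, y, z in vectors]


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    if n != len(b) or n < 2:
        raise ValueError("sequences must have equal length of at least 2")
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_a * var_b)


# Toy data, not taken from the dataset: an oscillation that grows in
# amplitude, alongside an acceleration that grows over the same samples.
mmg = [0.1, -0.1, 0.2, -0.2, 0.4, -0.4, 0.8, -0.8]
accel = [(0.0, 0.0, 1.0), (0.0, 0.0, 1.0), (0.0, 0.0, 2.0), (0.0, 0.0, 2.0),
         (0.0, 0.0, 4.0), (0.0, 0.0, 4.0), (0.0, 0.0, 8.0), (0.0, 0.0, 8.0)]

r = pearson(rms_envelope(mmg, window=2), magnitude(accel))
```

A single coefficient over a whole gesture would hide the segment-by-segment differences we observed, so in practice a measure like this would more plausibly be computed over short sliding windows.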

To summarise, our findings show that different types of sensor data do have complementary aspects; these might depend on the type and sensitivity of the sensor, and on the complexity of the gesture. Moreover, what might seem a single gesture can be segmented into sections that present different kinds of complementarity among the modalities. This points to the possibility for a performer to gain richer control of musical interfaces by training in the multimodal control of different types of sensing devices; that is, gestural and biophysical control of musical interfaces based on a combined analysis of different sensor data.